P2P.org's DeFi series is written for regulated institutions evaluating on-chain capital allocation. Each article addresses a specific infrastructure, governance, or compliance dimension that determines whether a DeFi allocation can clear institutional approval and operate within mandate.

This is part two of a three-part sequence on the structural gap between DeFi vault architecture and institutional requirements. Part one examined why most DeFi vaults were not built for institutional risk tolerance. Part three will explain what mandate validation at execution actually means for regulated allocators.

Previously in the series: Why Most DeFi Vaults Were Not Built for Institutional Risk Tolerance

The DeFi vault curator market has grown from $300 million to $7 billion in under a year, a roughly 2,200% expansion that reflects genuine demand for managed on-chain rewards strategies. The protocols enabling that growth (Morpho, Aave, Euler, and others) have built infrastructure that functions at scale and increasingly attracts institutional attention.

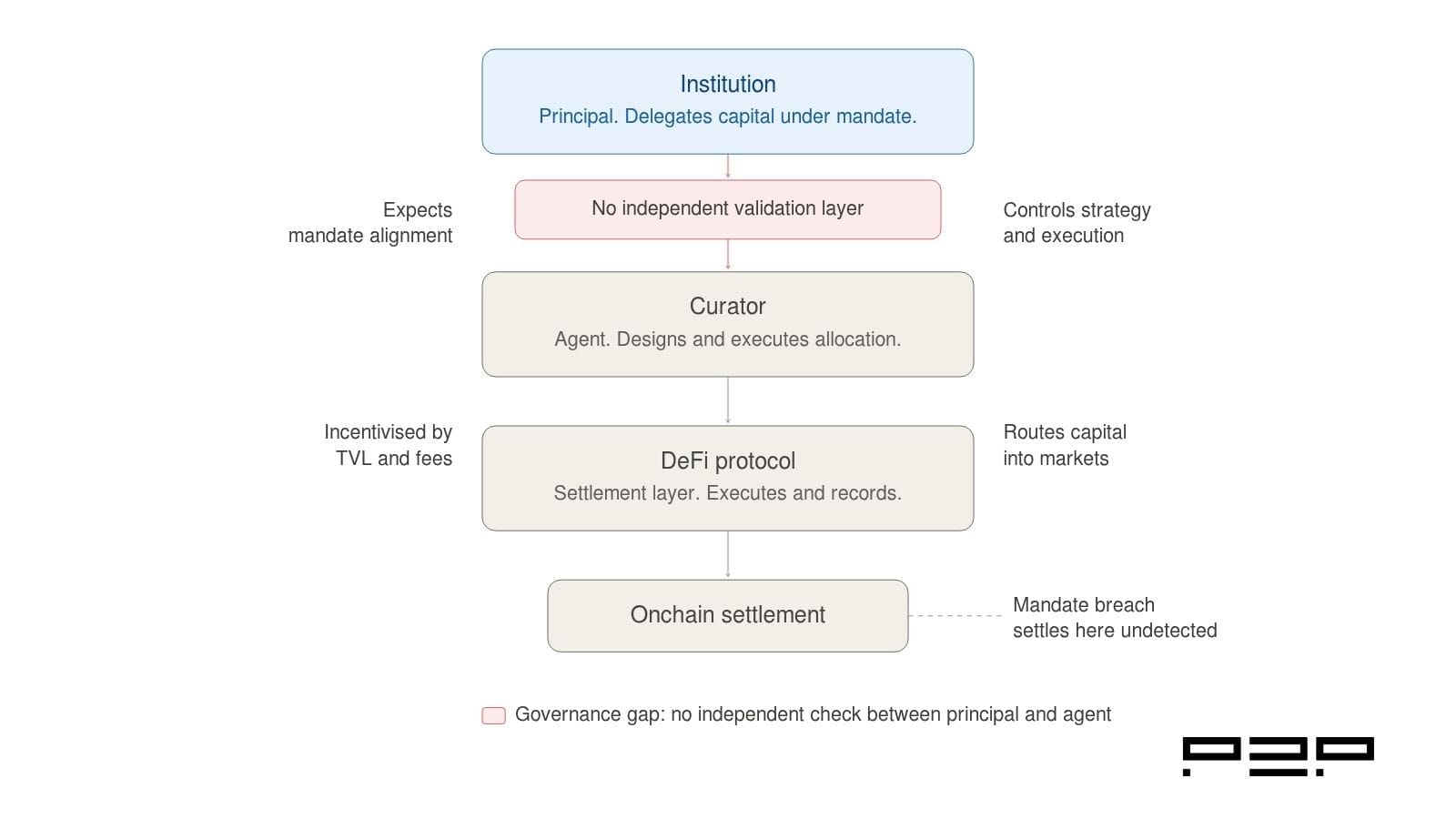

But the speed of that growth has outpaced a fundamental governance question the market has not yet answered: when a curator controls both the strategy design and its execution, with no independent validation layer between their decisions and on-chain settlement, whose interests are they actually serving?

For retail depositors, this question is manageable. They evaluate the curator's track record, accept the risk, and monitor through a dashboard. For regulated institutions, it is a structural problem with a specific name: the principal-agent problem. Unlike in traditional asset management, where regulatory frameworks, licensing requirements, and liability structures constrain the conflict, DeFi vault architecture has no equivalent mechanism. The conflict exists by design, not by accident, and understanding it is the starting point for any serious institutional evaluation of DeFi vault exposure.

Short on time? Here are the key takeaways. For the full analysis and supporting data, continue reading below.

The DeFi vault curator model creates a structural conflict of interest: curators are incentivised primarily by TVL growth and performance fees, not by alignment with any individual depositor's mandate. In a retail context, this is manageable. In an institutional context, it creates three specific problems that regulated allocators need to evaluate before committing capital.

First, curator incentives are not calibrated to mandate alignment. A curator optimising for TVL will make allocation decisions that attract more deposits, which may or may not be consistent with any individual institution's concentration limits, protocol allowlists, or risk parameters.

Second, there is no independent check between the curator's decision and on-chain settlement. In traditional delegated asset management, a compliance function or an independent operator validates decisions before they are executed. In most DeFi vault architectures, that layer does not exist. The curator decides, and the chain settles.

Third, the concentration of risk at the curator layer is now a documented systemic concern. Academic research covering six major lending systems found that a small number of curators intermediate a disproportionate share of total value locked and exhibit clustered tail risk. The late-2025 collapse of a major yield aggregation protocol, which triggered approximately $93 million in losses and a $1 billion DeFi market outflow within a week, illustrated what happens when curator-layer risk materialises without an independent protection layer in place.

The principal-agent problem is one of the foundational concepts in financial governance. It arises whenever one party (the agent) is entrusted to act in the interests of another (the principal) but has incentives that diverge from those interests. In traditional asset management, this problem is addressed through licensing requirements, fiduciary duties, contractual liability frameworks, and independent oversight structures that constrain agents' actions.

In DeFi vault architecture, the principal-agent problem is structural and largely unconstrained.

The curator's primary economic incentive is performance fees, typically earned as a percentage of yield generated or TVL managed. A curator who attracts more deposits earns more fees. A curator who generates higher apparent yields attracts more deposits. The incentive structure optimises for TVL growth and yield performance, not for mandate alignment with any individual depositor.

For a retail depositor, this misalignment is tolerable. The depositor chose the curator, understands the strategy, and accepts the risk profile. The relationship is simple: one principal, one agent, one strategy.

For a regulated institution, the misalignment is a governance problem. The institution has a mandate, documented concentration limits, protocol allowlists, and risk parameters that are not negotiable. The question is not whether the curator has a good track record. The question is whether the curator's incentive structure systematically aligns their allocation decisions with the institution's specific mandate at the point of execution. In most DeFi vault products, the honest answer is that it does not, because the architecture was never designed to make it do so.

The conflict of interest in DeFi vault design is not a matter of the curator's bad faith. Most curators are sophisticated operators with genuine risk management capabilities. The problem is structural: the architecture places curators in a position where their economic incentives and their clients' governance requirements pull in different directions, with no independent mechanism to detect or resolve the divergence.

Three specific manifestations are worth examining.

Curator-managed TVL more than tripled from $1.69 billion to $5.55 billion in 2025 as depositors increasingly delegated allocation decisions to the curator layer. As that TVL concentration grows, curators face increasing pressure to deploy capital efficiently across available markets. An allocation decision that maximises yield across a large pool of depositor capital may breach a specific institution's concentration limit in a particular protocol or asset class. Without a pre-execution validation layer, that breach settles on-chain before anyone is notified.
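To make the idea of a pre-execution check concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not a description of any vault's or provider's actual implementation: the `Mandate` class, the `check_allocation` function, the field names, and all figures are hypothetical. It also assumes that the proposed amount is new capital added to the portfolio and that the only rules are a protocol allowlist and a single per-protocol concentration limit.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Mandate:
    """Hypothetical institutional mandate; field names are illustrative."""
    allowed_protocols: frozenset
    max_protocol_share: float  # maximum fraction of the portfolio in one protocol


def check_allocation(mandate, positions, protocol, amount):
    """Return the mandate breaches a proposed allocation would cause.

    positions maps protocol name -> current exposure in USD.
    amount is assumed to be new capital deployed into `protocol`.
    An empty list means the allocation may proceed to settlement.
    """
    breaches = []
    if protocol not in mandate.allowed_protocols:
        breaches.append(f"{protocol} is not on the protocol allowlist")

    # Evaluate the concentration limit on the portfolio as it would look
    # after settlement, not as it looks today.
    total_after = sum(positions.values()) + amount
    exposure_after = positions.get(protocol, 0.0) + amount
    share_after = exposure_after / total_after if total_after > 0 else 0.0
    if share_after > mandate.max_protocol_share:
        breaches.append(
            f"{protocol} share {share_after:.1%} exceeds limit "
            f"{mandate.max_protocol_share:.1%}"
        )
    return breaches


# Hypothetical figures, for illustration only.
mandate = Mandate(
    allowed_protocols=frozenset({"Morpho", "Aave", "Euler"}),
    max_protocol_share=0.40,
)
positions = {"Morpho": 30_000_000.0, "Aave": 35_000_000.0, "Euler": 35_000_000.0}

# 55m of 125m would sit in one protocol (44%), so this proposal is blocked.
print(check_allocation(mandate, positions, "Morpho", 25_000_000.0))
```

The design point is the ordering: the check runs on the projected post-settlement state and returns its findings before anything is executed, so a breach is a blocked proposal rather than a position that has to be unwound.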

The curator business model is primarily performance fee-driven. Curators are rewarded for optimising returns. They are not contractually rewarded for maintaining mandate alignment with specific depositors. These are different objectives that happen to coincide in benign market conditions and diverge in stress scenarios, precisely when mandate alignment matters most.

Today, every curator uses their own subjective risk labels: "Low", "Medium", "High", "Aggressive", with no shared definitions, no comparable metrics, and no regulatory acceptance. This fragmentation, noted in research on the curator market, means institutions cannot compare vault strategies on a like-for-like basis or verify that a strategy description accurately maps to their mandate requirements. In traditional finance, credit rating agencies apply universal, transparent ratings to enable exactly this kind of comparison. The DeFi curator market has no equivalent.

Beyond individual mandate misalignment, the growth of the curator layer has created a systemic risk dynamic that institutions should understand before allocating.

Academic research covering six major lending systems from October 2024 to November 2025, including Aave, Morpho, and Euler, found that a small set of curators intermediates a disproportionate share of system TVL and exhibits clustered tail co-movement. The researchers concluded that the main locus of risk in DeFi lending has migrated from base protocols to the curator layer, and that this shift requires a corresponding upgrade in transparency standards (Source: Institutionalizing Risk Curation in Decentralized Credit, arXiv, December 2025).
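For readers who want a feel for what "a disproportionate share" means in numbers, the sketch below computes two generic concentration measures over TVL per curator: the share held by the largest k curators and the Herfindahl-Hirschman index. This is not the methodology of the cited paper, which this article does not describe; the function names and the TVL figures are hypothetical and chosen only for illustration. Neither measure captures tail co-movement, which requires return or drawdown data.

```python
def top_k_share(tvl_by_curator, k):
    """Fraction of total TVL intermediated by the k largest curators."""
    total = sum(tvl_by_curator.values())
    if total <= 0:
        raise ValueError("total TVL must be positive")
    largest = sorted(tvl_by_curator.values(), reverse=True)[:k]
    return sum(largest) / total


def herfindahl_index(tvl_by_curator):
    """Sum of squared TVL shares: 1/n for n equal curators, 1.0 for a monopoly."""
    total = sum(tvl_by_curator.values())
    if total <= 0:
        raise ValueError("total TVL must be positive")
    return sum((tvl / total) ** 2 for tvl in tvl_by_curator.values())


# Hypothetical TVL figures in USD millions, not taken from any dataset.
tvl = {"curator_a": 50.0, "curator_b": 30.0, "curator_c": 10.0, "curator_d": 10.0}

print(f"Top-2 share: {top_k_share(tvl, 2):.0%}")
print(f"HHI: {herfindahl_index(tvl):.2f}")
```

In this made-up example two of four curators hold 80% of TVL, and the index of about 0.36 sits well above the 0.25 that four equally sized curators would produce.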

In November 2025, a yield aggregation protocol with over $200 million in TVL experienced approximately $93 million in losses after capital was transferred to an off-chain manager without adequate independent oversight. The stablecoin it issued, which was used as collateral across multiple curator-managed vaults on Morpho, Euler, Silo, and Gearbox, depegged by over 70% within 24 hours. Within a week, the broader DeFi market saw a net outflow of approximately $1 billion.

The protocol in question was Stream Finance. The specific failure mode in that case, capital transferred off-chain by a party with unilateral control and no independent validation layer, is precisely the governance gap that the conflict-of-interest problem creates at scale. The curator had both the authority to make the allocation decision and the ability to execute it, with no independent check between decision and settlement.

This is not an argument against the curator model. Curators play a legitimate and valuable role in making DeFi yields accessible. It is an argument for understanding where the governance gap sits in the architecture, and for evaluating what infrastructure exists to close it before committing institutional capital.

The parallel in traditional delegated asset management is instructive.

When a regulated institution delegates capital management to a third party, the framework governing that relationship includes a defined mandate with specific investment parameters, independent compliance monitoring that validates decisions against the mandate before execution, contractual liability boundaries that separate the strategy manager from the oversight function, and regulatory requirements that constrain how the manager can act in their own interests.

None of these elements emerged organically from market dynamics. They were built, over decades, in direct response to the documented consequences of the principal-agent problem in asset management. The governance frameworks that make delegated mandate management institutionally viable in traditional finance exist because the alternative, unconstrained agent discretion, produced recurring failures.

DeFi vault architecture is at an earlier stage of that same evolutionary process. The curator model is the equivalent of delegated asset management without the governance layer. The protocols work. The curators are increasingly sophisticated. What is missing is the independent validation infrastructure that sits between the agent's decision and the principal's capital, which checks every execution against the mandate before it settles.

The conflict of interest in DeFi vault design is not a character flaw in the curator market. It is an architectural feature of a system that was built for retail capital and is now being evaluated by institutional allocators who operate under a different governance framework.

Curators are incentivised by TVL and performance fees. They are not structurally incentivised to maintain mandate alignment with individual institutional depositors. The architecture places no independent check between their decisions and on-chain settlement. And the concentration of risk at the curator layer is now a documented systemic concern, not a theoretical one.

Regulated institutions evaluating DeFi vault exposure should treat the conflict of interest question as an infrastructure evaluation, not a due diligence question about any individual curator. The question is not whether a specific curator has a strong track record. The question is whether the infrastructure governing the relationship between that curator and the institution's capital is built to validate mandate alignment at every execution point, independently of the curator's own incentive structure.

Next in this series: Mandate Validation at Execution: What It Means for Regulated Allocators (coming soon)

The principal-agent problem arises when a party entrusted to act in another's interests has incentives that diverge from those interests. In DeFi vaults, the curator acts as the agent for depositors but is primarily incentivised by TVL growth and performance fees rather than by mandate alignment with any specific depositor. The architecture provides no independent mechanism to validate that curator decisions align with individual depositor mandates before those decisions settle on-chain.

Curator compensation is driven by yield performance and TVL growth. An allocation decision that maximises yield for a large depositor pool may breach a specific institution's concentration limits, protocol allowlists, or risk parameters. Without pre-execution validation, that breach settles on-chain before the institution's risk committee is notified. The curator's economic incentive to optimise for yield and TVL is structurally misaligned with the institution's governance requirement to operate within mandate at every execution point.

Academic research covering six major lending systems found that a small number of curators intermediate a disproportionate share of total value locked and exhibit clustered tail co-movement. This means that stress at the curator layer, whether from poor allocation decisions, off-chain mismanagement, or collateral depegging, can propagate across multiple protocols simultaneously. For institutions, this creates a systemic exposure that is difficult to model, monitor, or contain within standard risk frameworks. The absence of an independent validation layer between curator decisions and on-chain settlement means that by the time the exposure is visible, it has already settled.

The key question is not whether a curator has a strong track record, but whether the infrastructure governing the relationship between that curator and the institution's capital is built to validate mandate alignment independently. Specifically, institutions should evaluate whether pre-execution controls exist to block transactions that breach mandate parameters before they settle, whether the compliance log produced by the vault is exportable and independently verifiable, and whether the roles of strategy curator, vault operator, and infrastructure provider are contractually separated with explicit liability boundaries. These are infrastructure questions, not due diligence questions about individual curators.
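One common way to make a compliance log independently verifiable is a hash chain, in which each entry commits to the one before it. The Python sketch below illustrates that general technique using only the standard library. It is an assumption about how such a log could be built, not a description of any vault's or provider's actual log format; the function names and record fields are hypothetical.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(prev_hash, record):
    # Canonical JSON (sorted keys, fixed separators) so the same record
    # always serialises to the same bytes.
    payload = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_entry(log, record):
    """Append a record, chaining it to the hash of the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    log.append(
        {"record": record, "prev_hash": prev_hash, "hash": _entry_hash(prev_hash, record)}
    )


def verify_log(log):
    """Recompute every hash; return False if any entry was altered or reordered."""
    prev_hash = GENESIS_HASH
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _entry_hash(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True


# Hypothetical records, for illustration only.
log = []
append_entry(log, {"action": "allocate", "protocol": "Morpho", "decision": "blocked"})
append_entry(log, {"action": "allocate", "protocol": "Aave", "decision": "allowed"})
print(verify_log(log))

log[0]["record"]["decision"] = "allowed"  # tamper with an exported entry
print(verify_log(log))
```

An auditor holding an exported copy can rerun `verify_log` without trusting the party that produced it. A hash chain on its own does not stop someone from regenerating the whole log, so in practice the latest hash would also need to be recorded somewhere the log's author cannot rewrite.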

Traditional delegated asset management frameworks include a defined mandate with specific investment parameters, independent compliance monitoring that validates decisions against the mandate before execution, contractual liability boundaries separating the strategy manager from the oversight function, and regulatory requirements constraining how managers can act in their own interests. These frameworks were built in direct response to the documented consequences of unconstrained agent discretion. DeFi vault architecture is at an earlier stage of the same evolutionary process.

[P2P.org builds the protection layer that sits between regulated institutions and DeFi execution environments, independently of the curators who manage allocation strategies. If you are evaluating the infrastructure requirement for a DeFi allocation program, talk to our team.]

<p><strong>Series:</strong> Validator Playbook | Institutional Infrastructure</p><p>The Validator Playbook is <a href="http://p2p.org/?ref=p2p.org">P2P.org</a>'s operational series for infrastructure engineers, staking product managers, and validator risk committees building or evaluating institutional-grade staking programs. Each article addresses a specific operational, technical, or governance dimension of running or selecting validator infrastructure at an institutional scale.</p><p><strong>Previously in the series:</strong> <a href="https://p2p.org/economy/ethereum-slashing-explained-what-custodians-funds-exchanges-must-know/">Ethereum Slashing Explained: What Custodians, Funds and Exchanges Must Know</a></p><h2 id="learnings-for-busy-readers">Learnings for Busy Readers</h2><p><strong>What this article covers:</strong></p><ul><li>Why standard metrics like fees and uptime are insufficient for institutional due diligence</li><li>The seven dimensions that institutional validator due diligence must cover</li><li>The questions to ask at each stage and what good answers actually look like</li><li>A complete due diligence checklist for procurement and risk committee use</li></ul><p><strong>The core argument:</strong> Validator due diligence is not a yield evaluation. It is an engineering reliability assessment. The institutions that make delegation decisions on the basis of mechanisms, not marketing, consistently achieve better outcomes across uptime, slashing avoidance, and incident response.</p><h2 id="introduction">Introduction</h2><p>Most validator due diligence processes start in the wrong place. Fee schedules get compared. Uptime dashboards get reviewed. Marketing materials get forwarded to risk committees. And then a delegation decision gets made on the basis of information that does not actually describe how a validator performs when something goes wrong.</p><p>In 2026, staking is no longer a peripheral activity for institutions. 
The institutional staking services market reached USD 5.8 billion in 2024 and is projected to grow to USD 33.31 billion by 2033 (Source: <a href="https://coinshares.com/us/insights/knowledge/institutional-staking-on-the-rise/?ref=p2p.org">CoinShares</a>). As allocations grow and staking becomes embedded in custody platforms, treasury programs, and regulated ETF products, the validator selection decision carries consequences that extend well beyond the immediate yield impact. A validator failure is an operational incident. A slashing event is a financial loss and potentially a regulatory disclosure obligation. Getting the selection process right is not optional.</p><p>This article sets out a practical due diligence framework for institutional teams evaluating validator infrastructure. It is written for staking product managers, validator risk committees, infrastructure engineers, and procurement teams who need to go beyond the surface metrics and understand what a validator operation actually looks like under stress.</p><h2 id="why-standard-metrics-are-not-enough">Why Standard Metrics Are Not Enough</h2><p>The most commonly referenced validator metrics are commission rate, advertised APY, and uptime percentage. None of these tells you what you actually need to know.</p><p>The commission rate tells you the price. It does not tell you what the price buys, whether the fee model is sustainable, or whether the operator has the resources to invest in the infrastructure quality that protects your stake. An aggressively low fee may be attractive in the short term, but it can also signal an under-resourced operation or a commercial strategy focused on volume rather than long-term relationships. </p><p>Advertised APY is a function of network conditions, not operator quality. Two validators on the same network with identical commission rates will produce similar yields under normal conditions. 
The difference between them shows up during chain upgrades, periods of network congestion, and incident response.</p><p>In 2026, the highest-impact staking outcomes are determined by operational reliability, key-management decisions, and incident behaviour, not the headline APR. The most expensive failures show up during chain upgrades, congestion, correlated cloud incidents, or governance-driven parameter changes (Source: <a href="https://cryptoadventure.com/staked-review-2026-non-custodial-institutional-staking-reporting-and-tradeoffs/?ref=p2p.org">Crypto Adventure</a>).</p><p>Uptime percentage is the most misleading metric of all. A validator can show 99.9% average uptime across a reporting period while having failed catastrophically during the one critical window that mattered. A client upgrade weekend. A network fork. A period of unusual congestion. Average uptime hides the variance that institutional risk frameworks are designed to assess.</p><p>The right frame for validator due diligence is not a yield evaluation. It is an engineering reliability assessment conducted the same way a risk committee would assess any critical infrastructure vendor.</p><figure class="kg-card kg-image-card kg-card-hascaption"><img src="https://p2p.org/economy/content/images/2026/04/validator_due_diligence_seven_dimensions.jpg" class="kg-image" alt="A seven-dimensional framework for institutional validator due diligence showing infrastructure architecture, key management, slashing risk controls, change management, reporting and auditability, commercial terms and exit, and protocol coverage, with a signal of maturity for each dimension." 
loading="lazy" width="1600" height="900" srcset="https://p2p.org/economy/content/images/size/w600/2026/04/validator_due_diligence_seven_dimensions.jpg 600w, https://p2p.org/economy/content/images/size/w1000/2026/04/validator_due_diligence_seven_dimensions.jpg 1000w, https://p2p.org/economy/content/images/2026/04/validator_due_diligence_seven_dimensions.jpg 1600w" sizes="(min-width: 720px) 720px"><figcaption><i><em class="italic" style="white-space: pre-wrap;">The seven dimensions of institutional validator due diligence. Each row covers what the dimension includes and what a strong answer from a provider looks like.</em></i></figcaption></figure><h2 id="the-seven-dimensions-of-institutional-validator-due-diligence">The Seven Dimensions of Institutional Validator Due Diligence</h2><h3 id="1-infrastructure-architecture-and-failure-mode-analysis">1. Infrastructure Architecture and Failure Mode Analysis</h3><p>The first question is not where the infrastructure is located. It is how it's designed to fail.</p><p>Every validator infrastructure has failure modes. The relevant question is whether those failure modes are independent or correlated. A validator operation that runs all nodes in the same cloud region with the same automation pipeline and the same deployment tooling has correlated failure risk. A single incident, a regional outage, or a software bug in an automated update can take down the entire operation simultaneously.</p><p>Validator operations should be evaluated like reliability engineering. A buyer should focus on correlated failure and safe redundancy. Downtime can trigger penalties when validators fail to meet protocol participation requirements. 
More severe penalties can be triggered by unsafe redundancy that leads to double-signing (Source: <a href="https://cryptoadventure.com/staked-review-2026-non-custodial-institutional-staking-reporting-and-tradeoffs/?ref=p2p.org">Crypto Adventure</a>).</p><p>The architecture questions that matter:</p><ul><li>Are nodes distributed across independent infrastructure providers and geographic regions?</li><li>Are multiple consensus client implementations supported to reduce client diversity risk?</li><li>Is there active-active or active-passive failover, and how does the failover logic prevent double-signing?</li><li>What is the rollback procedure if a software update causes instability?</li><li>Does the provider operate bare metal infrastructure, cloud, or a hybrid, and how is each maintained?</li></ul><p>A mature operator can answer each of these questions with specifics. An operator competing primarily on price typically cannot.</p><h3 id="2-key-management-and-access-controls">2. Key Management and Access Controls</h3><p>Validator key management is the most consequential security dimension in any staking program. A key compromise does not always result in direct theft of assets, but it can result in slashable behaviour, validator downtime, loss of governance participation, and reputational exposure that exceeds the financial loss.</p><p>In institutional staking, not all risk lies in infrastructure. It is also critical to understand who controls what: funds, signing keys, withdrawal credentials, reward parameters, exit processes, and operational authorisations. It is therefore not enough to speak abstractly about custodial or non-custodial staking. 
Due diligence must break down the operational and contractual flow: what the operator does, what the client retains, what the custodian controls, and which points require joint authorisation.</p><p>The key management questions that matter:</p><ul><li>Are signing keys and withdrawal keys held in separate environments with separate access controls?</li><li>Are Hardware Security Modules (HSMs) used for signing key operations?</li><li>How is access to signing infrastructure controlled, logged, and audited?</li><li>What is the procedure for key rotation, and how is it tested?</li><li>How is double-signing prevented specifically during failover events?</li></ul><p>Institutions should request a written description of the key management architecture, not a verbal summary. The document should specify who holds what access, under what conditions access is granted, and how key operations are logged.</p><h3 id="3-slashing-risk-controls-and-incident-history">3. Slashing Risk Controls and Incident History</h3><p>Slashing is the protocol-level penalty for validator misbehaviour. The two primary causes are double-signing and prolonged inactivity. Both are largely preventable through good operational design. For a detailed breakdown of how Ethereum's slashing mechanics work at the protocol level, refer to the previous article in this series: <a href="https://p2p.org/economy/ethereum-slashing-explained-what-custodians-funds-exchanges-must-know/">Ethereum Slashing Explained: What Custodians, Funds and Exchanges Must Know</a>.</p><p>For institutional due diligence, the relevant questions are not whether slashing has occurred, but what the operator's controls are, whether those controls have been tested, and what happened in any historical incidents.</p><p>The slashing risk questions that matter:</p><ul><li>What technical controls prevent double-signing during failover events specifically?</li><li>Has the operator experienced any slashing events? 
What was the root cause, and what architectural changes followed?</li><li>How are slashing conditions monitored in real time?</li><li>What is the incident response procedure if a slashing risk is detected before it triggers?</li><li>What contractual coverage applies to slashing losses, and what are the specific exclusions?</li></ul><p>Be precise about slashing guarantee language. Whether slashing guarantees exist and what exclusions apply is a critical evaluation question. The due diligence question is not whether these words exist on a page, but how they map to reality: how keys are protected, how changes are approved, what happens in incident response, and what financial or contractual backstops exist (Source: <a href="https://cryptoadventure.com/stakin-review-2026-iso-27001-non-custodial-staking-the-tie-acquisition-pros-and-cons/?ref=p2p.org">Crypto Adventure</a>).</p><h3 id="4-change-management-and-protocol-upgrade-handling">4. Change Management and Protocol Upgrade Handling</h3><p>Protocol upgrades are one of the highest-risk moments in any validator operation. Client software must be updated within specific windows. Timing matters. Rollback procedures must be available. Governance decisions must be understood and acted on promptly.</p><p>Institutions that delegate to validators are, in effect, delegating the decision of how protocol upgrades are handled. 
That is a governance decision with direct financial consequences, and it requires explicit evaluation.</p><p>The upgrade management questions that matter:</p><ul><li>How does the operator track protocol upgrade schedules across the networks it validates?</li><li>What is the process for testing upgrades before deploying to production validators?</li><li>How are staged rollouts managed, and what triggers a rollback?</li><li>Does the operator participate in validator governance processes, and is there a documented policy?</li><li>How are clients notified of upcoming upgrades and their potential operational impact?</li></ul><h3 id="5-reporting-and-auditability">5. Reporting and Auditability</h3><p>Institutional staking programs require reward attribution at the validator level, in formats compatible with internal risk management systems and external audit requirements. A dashboard is a monitoring tool. An audit trail is an exportable, independently verifiable record of what happened.</p><p>A buyer should request sample reporting packs that mirror internal requirements, including reward timing granularity and event classification; clear separation of principal, rewards, and fees; and chain event treatment such as redelegations or downtime penalties.</p><p>The reporting questions that matter:</p><ul><li>Can the provider deliver reward attribution at the validator level, disaggregated by epoch and by asset?</li><li>Is the reporting format compatible with internal accounting and risk management systems?</li><li>Is there an exportable, independently verifiable audit log of all validator operations, not just a dashboard?</li><li>How are chain events such as downtime penalties, redelegations, and slashing incidents logged and reported?</li><li>Can reporting be delivered in formats required for tax reporting in the institution's operating jurisdiction?</li></ul><p>On certifications: SOC 2 Type II is the most relevant independent security attestation for validator infrastructure providers. 
Enterprise clients typically want Type II reports because they demonstrate how controls perform in real operations, not just at a point in time (Source: <a href="https://wolfia.com/blog/soc-2-compliance-requirements-complete-guide?ref=p2p.org">Wolfia</a>). A SOC 2 Type II report covering availability and security criteria provides meaningful independent assurance that the controls governing validator uptime and key management are operating as documented. It is a floor, not a ceiling, but it is a meaningful one. <a href="http://p2p.org/?ref=p2p.org">P2P.org</a> achieved SOC 2 Type II certification in December 2025, independently validating our operational controls across security and availability criteria.</p><h3 id="6-commercial-terms-slas-and-exit-procedures">6. Commercial Terms, SLAs, and Exit Procedures</h3><p>The commercial structure of a staking relationship defines the accountability framework. Fees, SLAs, and exit procedures are not administrative details. They are the contractual expression of how risk is allocated between the institution and the provider.</p><p>SLAs should specify response times, escalation paths, penalties if uptime falls below the guarantee, and custom agreements. The question is what support is included: 24/7 monitoring, dedicated account teams, reporting, incident management, custodian integrations, contractual coverage, and contingency response capability.</p><p>The commercial terms questions that matter:</p><ul><li>What is the fee structure, and what is explicitly included vs. 
billed as an add-on?</li><li>Are there different tiers for standard delegation versus dedicated validator operations?</li><li>What does the SLA actually commit to, and what are the remedies if commitments are not met?</li><li>What is the procedure for migrating stake to a different provider if the relationship ends?</li><li>What would happen operationally if the provider ceased operations, and is there a documented continuity plan?</li></ul><p>It is also important to review exit processes: migration, validator changes, and orderly off-boarding. Another useful question is what would happen if the provider ceased operations tomorrow. The quality of the answer often reveals the provider's operational maturity.</p><h3 id="7-protocol-coverage-and-multi-chain-operational-consistency">7. Protocol Coverage and Multi-Chain Operational Consistency</h3><p>Institutional staking programs increasingly span multiple proof-of-stake networks. Ethereum, Solana, Polkadot, Cosmos, and others each have distinct consensus mechanisms, upgrade cycles, slashing conditions, and governance processes. A provider that operates well on Ethereum may not have the same operational maturity on Solana.</p><p>The protocol coverage questions that matter:</p><ul><li>On which networks does the provider have the deepest operational track record?</li><li>Are the infrastructure architecture and key management controls consistent across all supported networks?</li><li>How does the provider handle networks with different upgrade cadences and governance participation requirements?</li><li>Is there chain-specific reporting available for each network in the institution's portfolio?</li><li>How many networks does the provider support, and is that breadth matched by operational depth?</li></ul><p><a href="http://p2p.org/?ref=p2p.org">P2P.org</a> operates non-custodial validator infrastructure across more than 40 proof-of-stake networks, with consistent operational standards applied across each. 
Our <a href="https://p2p.org/networks/solana?ref=p2p.org">Solana staking infrastructure</a> and <a href="https://p2p.org/networks/ethereum?ref=p2p.org">Ethereum staking infrastructure</a> pages describe the specific architecture and reporting capabilities for each network, and our <a href="https://docs.p2p.org/?ref=p2p.org">technical documentation</a> provides integration details for procurement and engineering teams.</p><blockquote><strong>Evaluating validator infrastructure for your institution?</strong> <a href="http://p2p.org/?ref=p2p.org">P2P.org</a> provides non-custodial staking across 40+ proof-of-stake networks with SOC 2 Type II certified operational controls, validator-level reporting, and dedicated institutional support. <a href="https://p2p.org/networks/ethereum?ref=p2p.org">Explore P2P.org Staking Infrastructure</a></blockquote><h2 id="due-diligence-checklist">Due Diligence Checklist</h2><p>For staking product managers, validator risk committees, and procurement teams conducting institutional validator due diligence. 
Organised by the seven dimensions covered above.</p><p><strong>Infrastructure architecture:</strong></p><ul><li>[ ] Nodes distributed across independent infrastructure providers and geographic regions</li><li>[ ] Multiple consensus client implementations supported to reduce client diversity risk</li><li>[ ] Failover logic documented and specifically designed to prevent double-signing</li><li>[ ] Rollback procedures exist and have been tested for software update failures</li><li>[ ] Infrastructure type (bare metal, cloud, hybrid) documented with maintenance procedures</li></ul><p><strong>Key management:</strong></p><ul><li>[ ] Signing keys and withdrawal keys held in separate environments with separate access controls</li><li>[ ] HSM or equivalent used for signing key operations</li><li>[ ] Access to signing infrastructure is logged, audited, and role-based</li><li>[ ] Key rotation procedures are documented and tested</li><li>[ ] Double-signing prevention mechanism specifically covers failover scenarios</li></ul><p><strong>Slashing risk controls:</strong></p><ul><li>[ ] Technical controls against double-signing during failover are documented</li><li>[ ] Slashing incident history reviewed, including root cause and architectural changes</li><li>[ ] Real-time slashing condition monitoring is in place with defined alerting</li><li>[ ] Incident response procedure for pre-slashing detection is documented</li><li>[ ] Slashing guarantee or coverage language reviewed with specific exclusions confirmed</li></ul><p><strong>Change management:</strong></p><ul><li>[ ] Protocol upgrade tracking process documented for all supported networks</li><li>[ ] Staged rollout and rollback procedures for software updates are in place</li><li>[ ] Governance participation policy is documented</li><li>[ ] Client notification process for upgrades is defined with timelines</li></ul><p><strong>Reporting and auditability:</strong></p><ul><li>[ ] Validator-level reward attribution available disaggregated by epoch and asset</li><li>[ ] Reporting format compatible with internal accounting and risk management systems</li><li>[ ] Exportable audit log of all validator operations available (not dashboard only)</li><li>[ ] Chain event treatment (downtime, redelegations, slashing) is logged and reportable</li><li>[ ] SOC 2 Type II report available covering security and availability criteria</li></ul><p><strong>Commercial terms:</strong></p><ul><li>[ ] Fee structure reviewed with explicit list of included vs. additional services</li><li>[ ] SLA reviewed with specific uptime commitments and remedies confirmed</li><li>[ ] Exit and migration procedure documented</li><li>[ ] Operational continuity plan reviewed for provider cessation scenario</li></ul><p><strong>Protocol coverage:</strong></p><ul><li>[ ] Operational track record reviewed on each specific network in the institution's portfolio</li><li>[ ] Infrastructure and key management controls confirmed as consistent across networks</li><li>[ ] Chain-specific reporting confirmed as available for each required network</li><li>[ ] Governance participation policy confirmed for each relevant network</li></ul><h2 id="key-takeaway">Key Takeaway</h2><p>Validator due diligence is a reliability engineering assessment. The institutions that treat it as a yield comparison consistently underperform relative to those that evaluate mechanisms: how the infrastructure is designed to fail safely, how keys are managed and protected, how slashing is prevented rather than just insured against, and how the provider behaves when something goes wrong.</p><p>The seven dimensions covered in this framework are not equally weighted. Infrastructure architecture and key management are foundational. Slashing history and controls are the clearest signals of operational maturity. Reporting and audit trail capability determine whether the program can survive internal compliance scrutiny. Commercial terms and exit procedures define accountability. Protocol coverage determines whether the relationship can grow with the institution's staking program.</p><p>Evaluate each dimension with evidence, not assertions. 
Request documentation, ask for incident histories, and treat the quality of answers as a signal in itself.</p><h2 id="faq">FAQ</h2><p><strong>What is validator due diligence?</strong></p><p>Validator due diligence is the process of evaluating a proof-of-stake validator infrastructure provider before delegating institutional capital. It covers infrastructure architecture, key management, slashing risk controls, change management, reporting capabilities, commercial terms, and protocol coverage. It is distinct from a yield evaluation and should be conducted as a reliability engineering assessment.</p><p><strong>Why are uptime percentages insufficient for institutional due diligence?</strong></p><p>Average uptime percentages hide variance. A validator can achieve 99.9% average uptime while failing critically during the specific high-risk windows that matter most, such as client upgrades, network forks, or congestion events. Institutional risk frameworks require understanding incident behaviour and failure mode design, not average performance under normal conditions.</p><p><strong>What is the most important dimension of validator due diligence?</strong></p><p>Infrastructure architecture and key management are the foundational dimensions. Slashing history and controls are the clearest signals of operational maturity. No single dimension is sufficient on its own. A provider with excellent infrastructure but opaque key management or no documented incident response is not a complete institutional partner.</p><p><strong>What certifications should an institutional staking provider have?</strong></p><p>SOC 2 Type II is the most relevant independent security attestation for validator infrastructure providers. It independently verifies that operational controls governing uptime and security are operating as documented over a sustained period, not just at a point in time. ISO 27001 is an additional signal of information security management maturity. 
Certifications are a floor, not a ceiling, and should be reviewed alongside the specific controls they cover.</p><p><strong>How should institutions evaluate slashing guarantees offered by providers?</strong></p><p>Slashing guarantee language requires careful examination. The relevant questions are not whether the guarantee exists but what the specific exclusions are, what the maximum coverage is, and how the guarantee maps to the provider's actual controls. A guarantee that excludes the most likely slashing causes, such as misconfigurations during upgrades, provides limited protection. The strongest protection comes from robust anti-slashing controls, not contractual language.</p><p><strong>What should the exit and migration procedures include?</strong></p><p>Exit and migration procedures should document how stake is transferred to a new provider without exposing the institution to unnecessary downtime or slashing risk during the transition, who is responsible for each step, what the expected timeline is for each network, and what happens to accumulated rewards during the migration. Institutions should test the provider's fluency with this question during initial evaluation. A provider who cannot answer clearly has not thought through the scenario.</p><p><strong>How does validator due diligence differ across proof-of-stake networks?</strong></p><p>Each proof-of-stake network has distinct consensus mechanisms, upgrade cadences, slashing conditions, and governance processes. Validator due diligence must be conducted on a network-by-network basis, not generalised across a provider's entire portfolio. A provider with deep operational experience on Ethereum may have more limited maturity on Solana or Polkadot. Request chain-specific incident history and performance evidence for each network in the institution's staking program.</p><hr><p>[<em>Protocol-generated rewards are determined by network conditions and are variable. 
</em><a href="http://p2p.org/?ref=p2p.org"><em>P2P.org</em></a><em> does not control or set reward rates. Slashing risks are protocol-defined and client-borne. Operational safeguards are implemented to reduce slashing exposure, but do not eliminate protocol-level risk.]</em></p>
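<p>As a worked illustration of the FAQ point that average uptime hides variance, the following minimal Python sketch converts an uptime percentage into a yearly downtime budget and then measures uptime inside a single high-risk window. The function names, the 12-hour upgrade window, and the assumption that all downtime falls inside that window are hypothetical and chosen only for illustration; they are not drawn from any provider's data.</p>

```python
# Hypothetical illustration: every number here is an assumption, not measured data.
HOURS_PER_YEAR = 365 * 24  # 8760 hours in a non-leap year


def allowed_downtime_hours(uptime_pct: float, period_hours: float = HOURS_PER_YEAR) -> float:
    """Downtime budget implied by an average uptime percentage over a period."""
    return period_hours * (1 - uptime_pct / 100)


def window_uptime_pct(window_hours: float, downtime_in_window_hours: float) -> float:
    """Uptime measured only inside one specific window (for example, a client upgrade)."""
    return 100 * (1 - downtime_in_window_hours / window_hours)


# Yearly downtime budget implied by a 99.9% average.
budget = allowed_downtime_hours(99.9)

# Assumed worst case: the whole budget is consumed inside one 12-hour upgrade window.
upgrade_window_hours = 12
uptime_during_upgrade = window_uptime_pct(upgrade_window_hours, budget)

print(f"Downtime budget per year: {budget:.2f} hours")
print(f"Uptime inside the upgrade window: {uptime_during_upgrade:.1f}%")
```

<p>Under these assumptions, a 99.9% annual average allows roughly 8.76 hours of downtime. If all of it lands in one 12-hour upgrade window, uptime inside that window is about 27%, which is why incident-level evidence is more informative than the headline average.</p>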
