Enjoy the second post in our comic-strip-style ELI5 series, covering loss of rewards, stopping baking and redelegation on the Tezos blockchain.

Arthur is having dinner with Kate, a colleague from work. They get on very well and talk a lot about themselves. They discover that they share the same opinion about banks: both are crypto enthusiasts.

Arthur and Kate share their experiences with PoS tokens. They both love Tezos as an up-and-coming network.

Arthur: Who is your baker? I have been with P2P Validator since 2018. I love their intuitive dashboard - I can see my rewards and rewards history at a glance.

Kate: Oh, I really struggle to find out my rewards. My baker is terrible at keeping me in the loop. I discovered they didn’t pay my rewards on time or in full. It’s a real headache.

A: Be careful, in the future they may not pay at all. Why not change? It's really simple.

K: Won't I lose my rewards while I’m redelegating?

A: No, your rewards from your old baker must be paid to you in full even when you stop baking completely. If you redelegate, your new baker will start to pay your rewards automatically when they are due.

K: That sounds great! How do I get started?

A: It’s easy, just follow these simple steps:

step 1: Open your wallet and select your delegating account

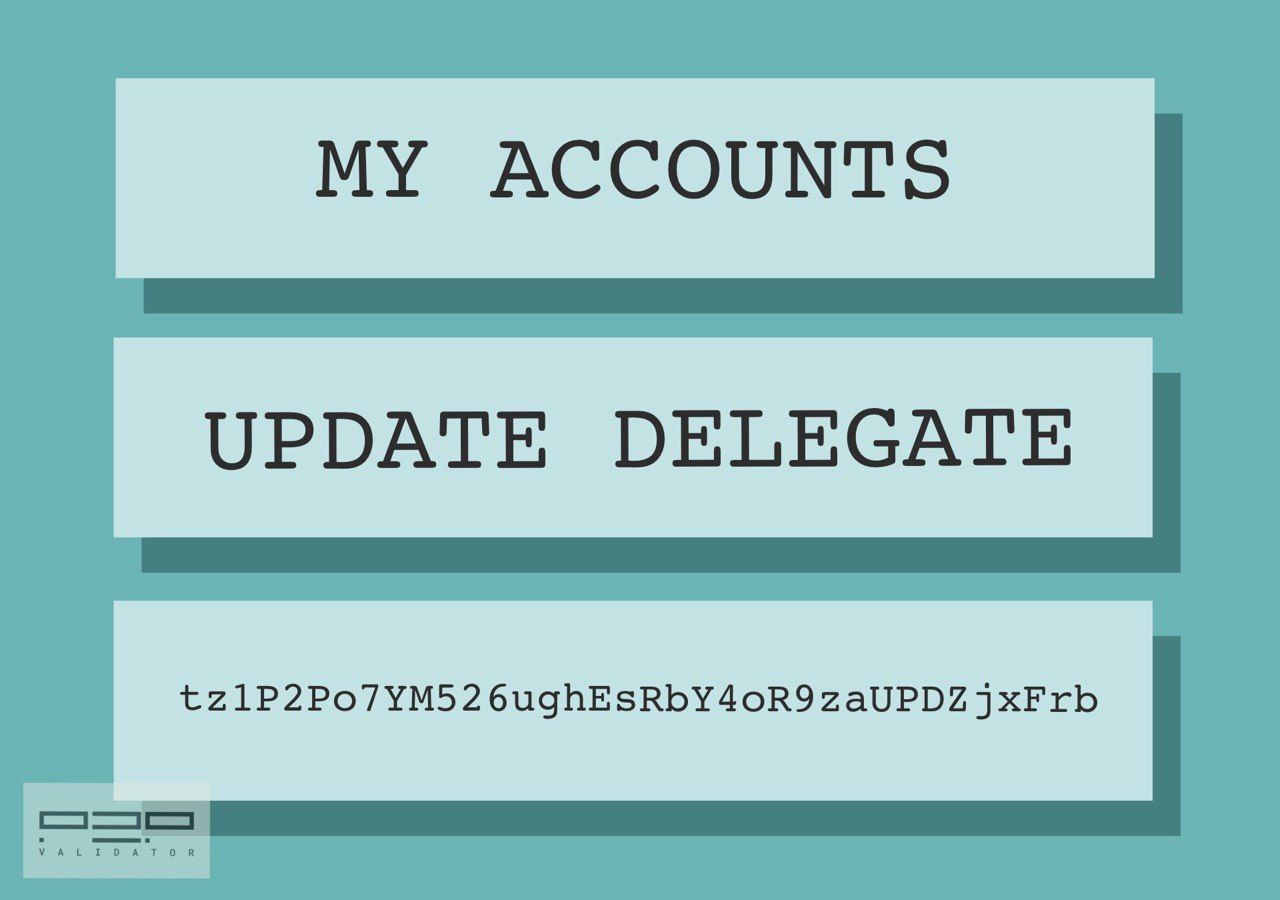

step 2: Press Delegate than Update delegate buttons

step 3: Confirm with Ledger and enter P2P Validator address, finally Confirm once more.

K: Do you recommend that I choose P2P Validator?

A: Absolutely. They are extremely efficient, have a great track record and only charge a fair validator fee.

K: You’ve sold them to me. How about coming up for a cup of coffee and helping me do it now?

A: This will be the beginning of a beautiful relationship!

In our next story you will learn about Proof-of-Stake (PoS) and staking. Let us know what you think and suggest topics you would like us to cover.

Let’s stake together!

Website: p2p.org

Stake XTZ with us: p2p.org/tezos

Twitter: twitter.com/p2pvalidator

Telegram: t.me/p2porg

<p>We live in a world of finance. Monetary incentives and market movements influence our daily decisions and define human lives in general. Under capitalism, the financial industry has become global, expressing economic relations between people and institutions. Nowadays, existing wealth distribution mechanisms are inefficient and the economic environment is unstable. <em><em>A lack of trust has become inevitable, and we are moving into a new era of decentralized finance (DeFi).</em></em> Borderless, accessible and transparent interactions between participants, without counterparties, that transform old and inefficient financial instruments are the new paradigm of the trustless economy.</p><figure class="kg-card kg-image-card"><img src="https://p2p.org/economy/content/images/2020/09/T8DKKpE-1.png" class="kg-image" alt loading="lazy" width="2000" height="938" srcset="https://p2p.org/economy/content/images/size/w600/2020/09/T8DKKpE-1.png 600w, https://p2p.org/economy/content/images/size/w1000/2020/09/T8DKKpE-1.png 1000w, https://p2p.org/economy/content/images/size/w1600/2020/09/T8DKKpE-1.png 1600w, https://p2p.org/economy/content/images/size/w2400/2020/09/T8DKKpE-1.png 2400w" sizes="(min-width: 720px) 720px"></figure><p>Kava is the first decentralized bank in the <a href="https://p2p.org/economy/introduction-to-cosmos-economy">Cosmos ecosystem</a>, bridging a broad range of digital assets to DeFi. With interoperability in mind and a team with solid cross-chain expertise, the Kava blockchain will bring new markets to the Cosmos ecosystem, providing users with liquidity and the necessary fundamentals for DeFi applications and services.</p><h1 id="4-de-s-of-kava"><strong>4 "De-s" of Kava</strong></h1><p><strong><strong>1) De-centralized lending solution.</strong></strong> Anyone can take out a loan in a stablecoin collateralized with crypto assets. There is no third party involved: each person is responsible for managing their own debt and, in effect, lends to themselves. 
This allows people to release a portion of the liquidity locked in passively held digital assets and spend it on whatever they want.</p><p><strong><strong>2) De-centralized margin trading.</strong></strong> When users put up crypto collateral to take a loan, they can use the loan to double down on their long position in a particular asset. In this case, if the value of the collateral asset increases, the user may earn a higher profit.</p><p><strong><strong>3) De-centralized stablecoin.</strong></strong> The Kava platform provides a mechanism to issue a stable digital currency through a collateralized debt position (CDP). The stable digital currency maintains a <code>1:1 peg</code> to <code>USD</code>, serving as a hedge against a bear market or as a true means of exchange without explicit volatility. This allows the decentralized stablecoin to take part in broader financial applications such as decentralized exchanges and financial marketplaces, creating a wide space for DeFi development.</p><p><strong><strong>4) De-centralized governance and ownership.</strong></strong> The most exciting part is that everyone can participate in the project's evolution and own a share of the network. All participants who stake the native token <code>KAVA</code> will be rewarded for effective governance, with rewards depending on transaction fees, total supply emission and repaid loan fees.</p><p>The Cosmos ecosystem is designed with existing assets like <code>BTC</code> or <code>XRP</code> in mind. 
If we assume that just <code>1%</code> of <code>BTC</code> takes part in DeFi, it will result in a value injection of more than <code>1.5 billion USD</code>, while also adding to the scarcity of <code>BTC</code> and positively influencing the cryptocurrency market in general.</p><hr><p><em><em>In the next chapters we will dive deeper into the details of the Kava platform and cover staking benefits, CDP, existing risks associated with staking and other important topics.</em></em></p><hr><p><strong><strong>P2P Validator</strong></strong> offers high-quality staking facilities and provides up-to-date information for educational purposes. Stay tuned for updates and new blog posts.</p><p><strong><strong>Web:</strong></strong><a href="https://p2p.org/?ref=p2p.org"> https://p2p.org</a></p><p><strong><strong>Twitter:</strong></strong><a href="https://twitter.com/p2pvalidator?ref=p2p.org"> @p2pvalidator</a></p><p><strong><strong>Telegram:</strong></strong><a href="https://t.me/p2pvalidator?ref=p2p.org"> https://t.me/p2pvalidator</a></p>
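<p>To make the collateralized debt position (CDP) idea from the Kava overview more concrete, here is a minimal Python sketch. It is not Kava's implementation: the <code>CDP</code> class, its field names, the example prices and the 150% minimum collateral ratio are all assumptions chosen for illustration. It only shows the general principle that the stablecoin debt a user can draw is bounded by the value of the locked collateral.</p>

```python
from dataclasses import dataclass


@dataclass
class CDP:
    """Toy collateralized debt position; every parameter is illustrative."""

    collateral_amount: float  # units of the locked crypto asset
    collateral_price: float   # USD price of one unit (hypothetical value)
    min_ratio: float = 1.5    # assumed minimum collateral-to-debt ratio
    debt: float = 0.0         # stablecoin drawn so far, pegged 1:1 to USD

    def collateral_value(self) -> float:
        """USD value of the locked collateral at the current price."""
        return self.collateral_amount * self.collateral_price

    def max_debt(self) -> float:
        """Largest total debt that keeps the position at or above min_ratio."""
        return self.collateral_value() / self.min_ratio

    def draw(self, amount: float) -> None:
        """Borrow more stablecoin, refusing to breach the minimum ratio."""
        if amount <= 0:
            raise ValueError("amount must be positive")
        if self.debt + amount > self.max_debt():
            raise ValueError("draw would breach the minimum collateral ratio")
        self.debt += amount

    def is_safe(self) -> bool:
        """True while the collateral still covers the debt at min_ratio."""
        if self.debt == 0:
            return True
        return self.collateral_value() / self.debt >= self.min_ratio


if __name__ == "__main__":
    position = CDP(collateral_amount=2.0, collateral_price=10_000.0)
    position.draw(10_000.0)
    print("debt:", position.debt, "safe:", position.is_safe())
    position.collateral_price = 7_000.0  # simulated price drop
    print("safe after price drop:", position.is_safe())
```

<p>In this sketch a price drop can push the position below the assumed minimum ratio, which is the kind of staking and lending risk the later chapters promise to cover.</p>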

from p2p validator

<h1 id="introduction"><strong>Introduction</strong></h1><p>Since the development of the first blockchain database, “Bitcoin”, complex transaction behaviour has been a “Holy Grail” for people wondering how they could pay, bet, play, and even order pizza with such assets.</p><p>The first implementation of complex transaction logic was available right in “Bitcoin”, whose stack-based virtual machine provides a limited set of operations for the end user to have some fun with. A fine example is the Omni Layer, built on top of that operation set, whose purpose is to let end users create and use custom user-defined assets. Such a system successfully fulfilled the contemporary requirements for liquid asset transfer. Unfortunately, this use of application logic rapidly exhausted the available throughput, so no mass adoption happened.</p><p>Another attempt to provide customizable complex transaction behaviour was made with the creation of “Ethereum”, which introduced a programming language created from scratch, called “Solidity”, for building even more complex application logic, in the hope that it would not exhaust the database throughput. Obviously, this led to another failure. A primitive language and a naive understanding of database architecture did not survive the reality check: in 2017 the network was severely congested by the CryptoKitties hype.</p><p>The scalability troubles came up again, so another popular solution was rapidly proposed. Its name was EOS. The solution was to split off the computation of complex transaction behaviour and process it with a set of cluster nodes called “Block producers”. This led to entrusting these “Block producers” with an enormous responsibility. They were now not only data storage providers but also computation providers. Now these guys not only store and process your data, they even define how your transaction behaves and decide whether such a transaction is allowed to be written at all. 
Furthermore, such an “improvement” led to unacceptable hardware requirements for database nodes, which made them truly awful to support. Moreover, such a split was not enough for building production-ready applications: who would like to find out that an upvote transaction, which was even paid for, was first queued and then rejected?</p><h2 id="you-have-something-to-propose"><strong>You have something to propose?</strong></h2><p>Yep. This blog post series is about CyberWay (<a href="https://cyberway.io/?ref=p2p.org">https://cyberway.io</a>), an improved and refactored EOS fork.</p><h1 id="proposal"><strong>Proposal</strong></h1><p>So what is CyberWay about in particular?</p><h2 id="eos-compatibility"><strong>EOS-compatibility</strong></h2><p>First of all, backward compatibility is preserved. The code keeps most of the tolerable parts of EOS but excludes the awful ones. Backward compatibility of the so-called “Smart Contracts” API is preserved too, although the internals have changed. That means every EOS application can easily become a CyberWay-based one and vice versa. Enough of that. Next.</p><h2 id="bandwidth"><strong>Bandwidth</strong></h2><p>EOS’s bandwidth distribution is closely tied to the amount of the asset a particular user owns. Furthermore, it requires the user to keep holding the asset for the bandwidth to be available at any time. That means the asset becomes highly valuable, but it also becomes unavailable to newcomers. 
So new applications are effectively not welcome to be built on EOS.</p><p>Striving to eliminate these inconveniences, CyberWay introduces some changes.</p><p>Bandwidth accounting is split into a couple of categories:</p><ol><li>Priority-based bandwidth gives a user the required computational resources according to the amount of core asset available.</li><li>Shared bandwidth supplies users with unused computational power according to the particular user's activity.</li></ol><h2 id="state-storage"><strong>State Storage</strong></h2><p>EOS’s state storage is extremely unreliable and does not ensure that data is saved and restored correctly after a restart. Furthermore, EOS does not provide any convenient API, even though it assumes that the data structures stored inside will be complex.</p><p>CyberWay solves these troubles. CyberWay uses an external DBMS for state storage, which means that developers can use their favourite query language, and that the external, well-designed replication and clustering mechanisms, built by real engineers and scientists, help reduce hardware costs and make life easier.</p><h2 id="event-engine"><strong>Event Engine</strong></h2><p>Because the storage internals are factored out into a separate service, an additional event engine based on transaction contents is required. It is no longer possible, as it was in EOS, for the database to alert the CyberWay executable when something has happened. A monitoring-oriented event engine, implemented as part of the updateable application, restores the ability to track the changes that come with every transaction, even though the data storage is completely external.</p><h2 id="virtualization"><strong>Virtualization</strong></h2><p>Just like EOS, CyberWay requires transaction behaviour to be easier to update than the whole cluster software. 
That is why a WebAssembly engine is used for virtualization, with C++ as the primary language for application development.</p><h1 id="separation"><strong>Separation</strong></h1><p>Why not just patch EOS?</p><p>Several of the troubles concern the data itself rather than the code:</p><ol><li>EOS’s architecture made a unit of memory expensive: according to <a href="https://eosrp.io/?ref=p2p.org">https://eosrp.io</a>, the cost of one memory unit fluctuates between $0.2 and $0.5. That means any transaction-intensive application (e.g. a social application) with even a fairly small number of active users (e.g. 2000-3000) would take at least 400MB per week, which would cost up to $200,000.</li></ol><p>EOS’s custom transaction behaviour is stored inside a huge hash table allocated in shared memory, and access to it is provided through an interface based on quite sophisticated executable logic, which also has its cost.</p><p>The obvious solution, building a cache service and processing all the data inside it, is also quite a task because:</p><ol><li>The so-called “Constitution” of EOS sets the longest period for which unused data can be stored under the same ownership to 3 years. This is quite unacceptable for some kinds of applications (e.g. social ones) that demand data availability from the very beginning, but it is hard to change because lots of other application types are perfectly fine with it.</li><li>EOS is made to produce replication packages as fast as it can, about every half a second. Such a frequency is fine for marketing purposes, but it significantly limits the complexity of custom transaction logic. 
This is also unacceptable.</li><li>The number of validators is small, only 21, and no significant increase is expected because of EOS protocol restrictions.</li><li>The ability of validators to censor is implemented right in the protocol core.</li></ol><h1 id="applications"><strong>Applications</strong></h1><p>Applications are welcome to use the following.</p><h2 id="shared-bandwidth"><strong>Shared Bandwidth</strong></h2><p>Shared bandwidth sets a limit on a user's activity based on the user's staked asset amount, but never below a basic threshold. This is required to prevent newcomers from spamming the database and to redistribute more computational resources to successful application developers.</p><p>Shared bandwidth is accounted separately for network, RAM and CPU usage.</p><p>As for the accounting, it is done against the bandwidth balance of a particular application, which shares the appropriate part of it with the user performing the transaction. That is why it is called “Shared” bandwidth. The application is a multisignature account that requires at least one additional signature from the particular user for its bandwidth to be used.</p><p>This type of bandwidth allows CyberWay to offer applications free onboarding of users at early stages via the CyberWay Acceleration Program. Later, successful applications can get CYBER tokens from a special fund within the Acceleration Program.</p><h2 id="priority-based-bandwidth"><strong>Priority-Based Bandwidth</strong></h2><p>Priority-based bandwidth is what a user needs to be sure that a transaction gets written. It is determined by the amount of core asset staked by the particular user and guarantees that the transaction gets written at the very next replication. The total amount of staked core asset forms the bandwidth market.</p><p>Each account gets a share of the whole bandwidth market according to the amount of core asset the account has staked. 
If some user owns and stakes a significant part of the whole bandwidth supply, the resources available to other users are reduced. This is definitely not what resource-demanding applications want.</p><p>That is why CyberWay introduces bandwidth prioritization. That means the bandwidth is split into a couple of categories:</p><ol><li>Guaranteed bandwidth, which works exactly like EOS’s.</li><li>Priority bandwidth, which is determined by the priority of the particular account.</li></ol><p>How does an account earn priority?</p><p>There are a couple of ways:</p><ol><li>Perform fewer transactions using the currently available guaranteed bandwidth. The priority drops as a single user submits more transactions.</li><li>Stake more core asset.</li></ol><p>The guaranteed/priority bandwidth split ratio is set by the cluster validators.</p><h2 id="memory-rent"><strong>Memory Rent</strong></h2><p>Cluster RAM is something applications require to work. In contrast to EOS, CyberWay expects RAM to be rented from the so-called block producers rather than owned. The rules are as follows:</p><ul><li>Every block producer sets a price for 1Kb of memory per month. The price starts from the median price across all block producers.</li><li>Users place their orders to rent a particular amount of memory per month.</li><li>An order is considered placed for a week; after that it is evaluated if the cluster-wide demand for memory is lower than the amount offered.</li><li>If the amount of memory offered is lower than the demand, the offered memory is auctioned.</li></ul><p>If the memory rent period is over but some user data is still stored there, an archive operation comes into play. 
Block producers are in charge of initiating such archiving, and the user can restore the data for the median price among block producers.</p><h2 id="dbms-based-state-storage"><strong>DBMS-based State Storage</strong></h2><p>Unlike existing so-called “blockchain” databases, CyberWay does not intend to implement its own database management software and uses an external DBMS as state storage for better reliability. For now, only MongoDB is available, but more will come if required. Such a configuration is considered more troublesome to manage but more reliable in the long term.</p><p>Embedded state storage is also available in CyberWay. RocksDB is used for the in-memory, in-daemon storage management component, which is faster than MongoDB.</p><h2 id="event-engine-1"><strong>Event Engine</strong></h2><p>As the state storage engine is encapsulated and factored out of the controller daemon, the event engine is implemented as a helper application that synchronizes and manages the data in external storage.</p><p>The input of this application is a set of transactions; each one is registered as “processed”, and only then is its data unpacked into state storage.</p><p>This approach makes it possible to ensure that routine data operations are processed as required and to split the data-managing daemon into single-responsibility microservices.</p><h1 id="domain-names"><strong>Domain Names</strong></h1><p>Unlike in other databases, a created account is not identified by a key; instead it gets a unique 8-byte identifier encoded in base32. In addition, unique human-readable 63-byte names can be assigned to every user. If the number of such names is greater than one, the name is charged for and called a “Domain Name”.</p><p>Every domain name can be auctioned by the base protocol or created by the owner of a lower-level domain name. Domain names are transferable and reassignable. 
Therefore, the need for conversion between a domain name and an account identifier is met by a newly introduced mechanism, as is the need for domain transactions. Domain transactions are transactions that are applied only to the data related to a particular domain name/application.</p><h1 id="protocol-properties"><strong>Protocol Properties</strong></h1><p>The protocol properties have also changed compared to EOS’s.</p><h2 id="block-generation"><strong>Block Generation</strong></h2><p>First of all, the block generation time is increased to achieve more stable node replication. EOS’s 0.5-second block replication time is fine for most applications if all the nodes are located in the same datacenter. But for a truly distributed protocol, it needs to be increased because of the higher network latency. CyberWay sets the block replication time to 3 seconds.</p><h2 id="block-producers"><strong>Block Producers</strong></h2><p>Block producers are the key members of the protocol. They keep the database safe and consistent and get rewarded for that.</p><p>In contrast to EOS’s 21 default block producers, in CyberWay the number of block producers is to be increased to 101 in the future. This is required to achieve more decentralization.</p><h2 id="consensus-algorithm"><strong>Consensus Algorithm</strong></h2><p>The CyberWay consensus algorithm is heavily inspired by those of Tezos and Cosmos. Thus, active users are rewarded for voting and inactive users are punished for not voting.</p><p>Every account is allowed to vote for several validators with staked tokens.</p><p>A block producer’s weight is determined as follows: w = m / sqrt(S), where m is the number of votes for a particular candidate and S is the total number of votes for a particular candidate (or the number of staked tokens, as 1 vote is 1 token).</p><p>A particular block producer receives a reward from the emission and redistributes a share of it among its supporters. 
In case of misbehaviour, e.g. a missed block, the block producer as well as its supporters are fined. The staked tokens are burned. This novelty makes block producers more responsible, and voters more careful and thoughtful.</p><p>The block producers get a share of the emission. The share depends on the total amount of staked tokens. The more tokens are staked, the lower the inflation. Thus, CyberWay has built-in incentives for users to participate in governance via voting. Moreover, passive users are diluted, as they do not get any rewards from validators.</p><p>What if a user believes that another user understands better which block producer is the best service provider? CyberWay covers this with a proxy mechanism, which lets a user delegate their own assets to another user, called a “Proxy”. The proxy user gets fees for this service.</p><h2 id="censorship"><strong>Censorship</strong></h2><p>In contrast to EOS, CyberWay completely removes any inequality between users. There are no privileged accounts, no so-called “Constitution”, no blacklists.</p><h2 id="workers"><strong>Workers</strong></h2><p>Workers are a mechanism first introduced in BitShares. These are users who get a share of the issuance for making improvements to the protocol. An improvement can be registered and referenced by any user; the particular improvement to work on is selected by a vote of the validators.</p><h1 id="conslusion"><strong>Conclusion</strong></h1><p>CyberWay is one more fork of EOS, designed to handle more complex applications with more decentralization. Workers are considered the most powerful tool for decentralized protocol improvement. The scalability and performance CyberWay introduces are good enough for running complex social, financial service or gaming applications. The absence of censorship and privileged accounts makes CyberWay even more decentralized; more on that is coming in the next blog post.</p>
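<p>The EOS memory cost estimate from the “Separation” section can be checked with a few lines of arithmetic. The Python sketch below assumes that the priced memory unit is one kilobyte and that a megabyte is 1024 kilobytes; the article states neither, and the function and constant names are made up for this example. Under those assumptions the quoted price range reproduces the “up to $200,000” figure.</p>

```python
KB_PER_MB = 1024  # assumption: binary megabytes; the article does not specify


def weekly_ram_cost_usd(megabytes: float, usd_per_kb: float) -> float:
    """Cost of buying `megabytes` of RAM at `usd_per_kb` dollars per kilobyte."""
    return megabytes * KB_PER_MB * usd_per_kb


# Figures quoted in the article: 400MB per week, $0.2 to $0.5 per memory unit.
low = weekly_ram_cost_usd(400, 0.2)
high = weekly_ram_cost_usd(400, 0.5)

if __name__ == "__main__":
    print(f"${low:,.0f} to ${high:,.0f} per week")
```

<p>With these inputs the estimate spans roughly $82,000 to $205,000 per week, so the upper bound matches the article's figure only if the unit price is taken per kilobyte.</p>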

from p2p validator